How DataForSEO Ensures API Reliability and 99.95% Uptime

Numerous SEO tools and SEO specialists rely on APIs to streamline data collection. Yet, how does one know if an API is reliable? Uptime is probably the first thing that comes to mind, and for a good reason. When your business processes and revenue depend on service availability, you simply can’t overlook this characteristic.

Still, uptime is a one-dimensional view of reliability. What about security, turnaround time, and data accuracy?

In this article, we’ll explore these and more factors to give you a broader perspective on the reliability of SEO APIs. We’ll also explain how we’re keeping DataForSEO APIs steadfast.

Contents:

Designing SEO API architecture to secure 99.95% uptime

Implementing 24/7 monitoring and support at DataForSEO

Minimizing DataForSEO API turnaround time

Ensuring high-level security at DataForSEO

Maintaining SERP data accuracy with DataForSEO AI

Perfecting API documentation for better user experience

Designing SEO API architecture to secure 99.95% uptime

First and foremost, an API cannot be effective and reliable without consistent and strong uptime. To establish a quality service for our customers, DataForSEO has designed its API architecture for high availability from the very beginning. In this part, we’ll tell you about the solutions we’re using to ensure 99.95% uptime.

Our system is built on the principle of redundancy. In other words, there’s always a spare component ready to replace one that has failed or requires maintenance. As a result, no single failed component can bring down the entire system. Below, we’ll give you a few examples of how we’re implementing this principle.

First off, we have a cluster architecture. Clustering refers to connecting a number of servers into a group that functions as a single server. So, if one of the servers in a cluster fails, another server from the cluster will kick into gear.

Besides that, the clustering methodology allows us to easily spin up more server nodes whenever we need to scale. In this way, we can keep the performance high when the number of requests to our APIs surges.

In addition to using clusters, we’re keeping our system distributed across several data centers. The multi-data center strategy keeps the DataForSEO system live even if a whole data center in one location experiences an outage. In that case, your requests are redirected to a working data center.

Similarly, we have geo-distributed data storage synchronized across all clusters and nodes. Because the data is stored independently and replicated, we can ensure high availability and security.

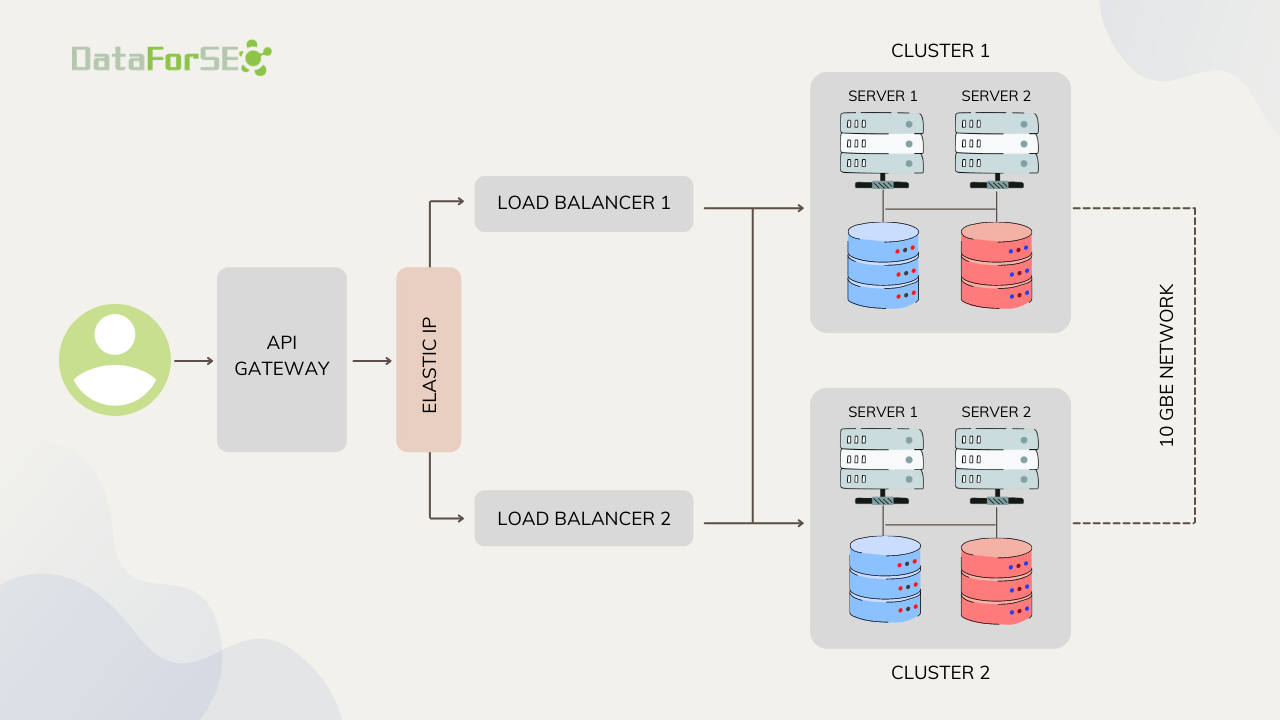

The simplified scheme above illustrates the DataForSEO architecture. As you can see, it includes a few components we haven’t mentioned yet. From left to right: an API gateway helps us with API management and security, and an elastic IP allows for rapid IP remapping between load balancers, which in turn distribute tasks across the clusters.

Of course, many details of the architecture and logical routing depend on the API or endpoint in question and on the parameters applied to a request. For example, in the case of SERP API, the underlying system is more complex and includes more servers, a sophisticated IP rotation algorithm, and a robust web crawling engine.

Still, every piece of our hardware and software is designed based on the same principle of redundancy. Relying on this logic, we’ve been able to achieve a guaranteed 99.95% uptime.

Implementing 24/7 monitoring and support at DataForSEO

Sure enough, there may be situations when DataForSEO APIs cannot process some requests due to reasons beyond our control.

Essentially, 99.95% uptime translates to 0.05%, or 4 hr 22 min 58 sec, of potential downtime per year. This is the maximum total duration of API unavailability. The actual downtime over a year may be much shorter, or there may be none at all.
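The downtime figure can be reproduced with a few lines of Python. This sketch assumes an average Gregorian year of 365.2425 days and truncates fractional seconds. The function name `max_downtime_hms` is ours, chosen for illustration.

```python
from typing import Tuple

# Assumption: an average Gregorian year of 365.2425 days.
SECONDS_PER_YEAR = 365.2425 * 24 * 60 * 60


def max_downtime_hms(uptime_percent: float) -> Tuple[int, int, int]:
    """Return the yearly downtime budget as (hours, minutes, seconds)."""
    downtime_seconds = int(SECONDS_PER_YEAR * (100 - uptime_percent) / 100)
    hours, remainder = divmod(downtime_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    return hours, minutes, seconds


print(max_downtime_hms(99.95))  # prints (4, 22, 58)
```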

Throughout the years we’ve been in business, we’ve invested a lot of time and effort to minimize the possibility and duration of downtime. Besides building resilient infrastructure, we’ve also developed an internal analytics system running 24/7.

First, our system monitors the operation of the external data sources we use and allows our development team to forecast possible issues or outages. Second, it tracks the operation of our infrastructure. Lastly, it alerts all responsible developers whenever something goes down so they can bring it back up in the shortest possible time.

To sum up, 99.95% uptime is a very reputable and strong benchmark, but we’re working on further enhancements to our system.

On a final note, we never charge for requests that failed due to our system error. If you notice any malfunctioning, you can always contact our 24/7 support using a live chat on our website. The support agent will reach an on-call developer, and the issue will be fixed promptly.

We’ve implemented round-the-clock customer support from day one. You can learn more about the support team at DataForSEO in this article.

Minimizing DataForSEO API turnaround time

Besides system availability, turnaround time is another critical metric that contributes to the reliability of an SEO API. In this part, we will explain how we manage to minimize the turnaround time at DataForSEO, and how we measure it.

But, first off, it is important to note the difference between latency and turnaround time.

Latency is counted from the moment when a POST request is sent to the moment when an HTTP status code is received in response.

Turnaround time is measured from the moment a task is set to the moment it is completed.
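The two definitions above can be expressed as a small Python sketch. The `TaskTimeline` class and the timestamps are made up for illustration, and we assume here that a task counts as “set” at the moment the POST request is sent.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TaskTimeline:
    request_sent: datetime     # the POST request leaves the client
    status_received: datetime  # the HTTP status code arrives in response
    task_completed: datetime   # the results are ready

    @property
    def latency(self) -> timedelta:
        # From sending the request to receiving the HTTP status code.
        return self.status_received - self.request_sent

    @property
    def turnaround(self) -> timedelta:
        # Assumption: the task is "set" when the request is sent.
        return self.task_completed - self.request_sent


# Illustrative timestamps only; these are not measured values.
start = datetime(2024, 1, 1, 12, 0, 0)
timeline = TaskTimeline(
    request_sent=start,
    status_received=start + timedelta(milliseconds=40),
    task_completed=start + timedelta(seconds=6),
)
print(timeline.latency.total_seconds())     # prints 0.04
print(timeline.turnaround.total_seconds())  # prints 6.0
```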

At DataForSEO, the latency of our APIs is kept within a matter of milliseconds. The whole system functions without operational delays because all servers are connected to a single 10GbE network.

But, as you understand, turnaround time is a more important metric because it shows how fast you can get the results once you have sent a request.

With DataForSEO APIs, you can expect rapid turnaround times thanks to clustering and geo-distributed architecture, as the system load is spread and balanced. Yet, unlike latency, turnaround time varies by API.

The table below shows the average turnaround time for different DataForSEO APIs and queue priorities.

| API | Live tasks | Standard queue | Priority queue |
| --- | --- | --- | --- |
| SERP API, Merchant API, Business Data API | up to 11 seconds | 5 minutes* | up to 1 minute |
| Keyword Data API | up to 7 seconds | 5 minutes* | n/a |
| Backlinks API, DataForSEO Labs API, Domain Analytics API, Content Generation API | up to 2 seconds | n/a | n/a |
| App Data API | up to 2 seconds | up to 45 minutes | up to 1 minute |
| On-Page API, Content Analysis API | No average; the value depends on the scope and complexity of a crawl/analysis | | |

*the guaranteed turnaround time is 45 minutes.

As you can see, some APIs need a bit more time for fetching and processing data.

In particular, APIs that rely on our scraping solutions have a slightly slower turnaround time for live tasks than Keyword Data API, which is based on external data sources (Google Ads and Bing Ads). At the same time, Backlinks API and DataForSEO Labs API only query our own database to get the data, which is why they deliver the results the fastest.

As for On-Page API, it is a customizable crawling engine, and therefore the speed of each crawl is determined by the parameters applied for processing and the number of website pages to scan. For example, if you scan one page using the Instant Pages endpoint, the task should be completed in around the same amount of time as a live SERP API task. The same goes for Content Analysis API which works based on the Live method.

Overall, the turnaround time of our APIs is faster than the industry average. We value our reputation, so you can rest assured that DataForSEO will always deliver your data as fast as promised.

Ensuring high-level security at DataForSEO

While APIs are very commonly used, pulling information from an outside source may involve certain risks, such as data breaches. That is why API security is a major factor that shouldn’t be overlooked when selecting your API provider.

Reputable data vendors apply various security techniques to address vulnerabilities and keep their APIs and customers protected from all kinds of related threats.

At DataForSEO, we’ve always followed security best practices with reasonable care. We regularly test our system’s defenses and have a team responsible for maintaining security across DataForSEO infrastructure and APIs.

To begin with, we employ OAuth 2.0 for logging into the DataForSEO Dashboard (GUI for API account management). OAuth 2.0 is a leading standard for authorization that allows users to create an account with credentials from another system (e.g., Google or GitHub). Basically, the OAuth 2.0 protocol protects users because their credentials don’t get disclosed, and the API provider only receives tokens.

Besides that, we’re using an API gateway, which is a proven way to secure the interactions between the API and the client, and between the API and the backend. DataForSEO also implements an API firewall, TLS encryption, and data validation, which are all solid mitigation mechanisms against different types of attacks.

To prevent denial-of-service attacks, in particular, we apply rate limits. The standard limit is 2000 API calls per minute. We can apply higher limits, but a customer should contact us to request this option. In this way, we keep DataForSEO API availability and performance protected from the threat of random surges in the number of API calls.
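If you want to stay under the limit on your side, a client-side throttle is one option. The sketch below is a generic sliding-window limiter in Python, not the mechanism DataForSEO runs on its servers. The `SlidingWindowLimiter` class and its parameters are hypothetical, and only the default of 2000 calls per 60 seconds comes from the limit mentioned above.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` calls within any `window_seconds` interval."""

    def __init__(
        self,
        max_calls: int = 2000,
        window_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._clock = clock  # injectable so the limiter can be tested
        self._calls: Deque[float] = deque()

    def try_acquire(self) -> bool:
        """Record a call and return True if it fits in the current window."""
        now = self._clock()
        # Drop timestamps that have already left the window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) < self.max_calls:
            self._calls.append(now)
            return True
        return False


# Demo with a fake clock and a tiny limit so the behavior is easy to follow.
fake_now = [0.0]
limiter = SlidingWindowLimiter(max_calls=3, window_seconds=60.0,
                               clock=lambda: fake_now[0])
results = [limiter.try_acquire() for _ in range(4)]
print(results)  # prints [True, True, True, False]

fake_now[0] = 60.0  # one full window later, the old calls have expired
print(limiter.try_acquire())  # prints True
```

In real code, you would call `try_acquire()` before each API request and wait briefly whenever it returns `False`.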

Last but not least, we have received an ISO/IEC 27001:2013 certification for the DataForSEO information security management system. Nick Chernets, CEO at DataForSEO, points out: “DataForSEO has always been committed to ensuring streamlined and secure data experiences for everyone. This certification allowed us to attest to this commitment, and validate our effort.”

You can read more about our certification following this link.

Maintaining SERP data accuracy with DataForSEO AI

Data accuracy is a vital component of data quality and is one of the characteristics that make a reliable data provider stand out.

From an SEO API provider’s perspective, the biggest challenge is maintaining the accuracy of data collected from search engine results. The point is that SERPs are in constant flux, and this is true not only of rankings, ads, and the emergence of new elements and algorithms. The HTML markup of results pages also shifts frequently.

To ensure the highest level of accuracy at DataForSEO, we’re using a proprietary AI solution. It detects SERP markup changes and uses machine learning to analyze SERP data and identify patterns.

In particular, whenever there’s a meaningful shift in the markup of an existing element, the DataForSEO AI flags it and sends a notification to our development team. In this way, we can quickly review new structures and adjust our scraping models.

Besides that, DataForSEO AI allows us to swiftly find and recognize new SERP elements. Once a new SERP feature is found, we can add it to our models, prepare the documentation, and release it faster to ensure consistency and accuracy.

Perfecting API documentation for better user experience

When you have the possibility to try out an API before integrating it, detailed documentation will certainly help you get started smoothly and quickly. Even after you test and deploy an API, documentation remains an important source you can rely on for instructions. For example, it will help you implement new API features, handle errors properly, and much more.

To make it easier for you to learn and use DataForSEO APIs, we’re keeping them completely packaged with API overviews, field descriptions, error lists, code examples, and migration guides when necessary. You can find it all here.

DataForSEO documentation is human-written, and before any new parameter or endpoint gets published, it is double-checked by a responsible developer. We also keep our docs and the actual code in sync and notify our customers in case of any major changes that affect the structure of API requests or responses.

Lastly, DataForSEO continuously improves the documentation to enhance API usability and always values customer feedback. For example, if you consider something difficult to understand, you can simply drop a line to our customer support. We’ll look for a better explanation, and add it to our docs.

Here at DataForSEO, we’re committed to keeping our customers happy and our APIs well-documented and easy to use. Whether you’re an SEO specialist or a developer, DataForSEO API docs will equip you with everything you need to seamlessly obtain data at any stage of your journey with us.

Conclusion

In the end, it’s safe to say that high uptime is a crucial factor when it comes to evaluating API reliability. However, it’s not the only one to consider. Here’s a list of characteristics that make for a reliable API:

- Consistent and strong uptime

- 24/7 monitoring and support

- Fast turnaround time

- High-level security

- Accurate SERP data

- Well-written API docs

Luckily, DataForSEO can offer you all of the above. But don’t take our word for it: start your free unlimited trial now and see for yourself.

P.S. You can test our APIs right in the DataForSEO Dashboard using our API playground.